Project

Term Project Presentation and Poster Session

- Date: 2024/06/11 (Wednesday)

- Time: 3:30 PM - 5:30 PM

- Location: Room 106 and 1st Floor, Building 301

Schedule

- 3:20 PM ~ 3:40 PM: Session 1 Presentation (Room 106, Building 301)

- 3:40 PM ~ 4:10 PM: Session 1 Poster Session (1st Floor, Building 301)

- 4:10 PM ~ 4:30 PM: Session 2 Presentation (Room 106, Building 301)

- 4:30 PM ~ 5:00 PM: Session 2 Poster Session (1st Floor, Building 301)

Algorithmic Foundations of RL

RL for Robotics & AI Systems

- Force-Aware Deformable Object Manipulation with Diffusion policy (강승훈) - Best Poster Awards!

1. Algorithmic Foundations of RL

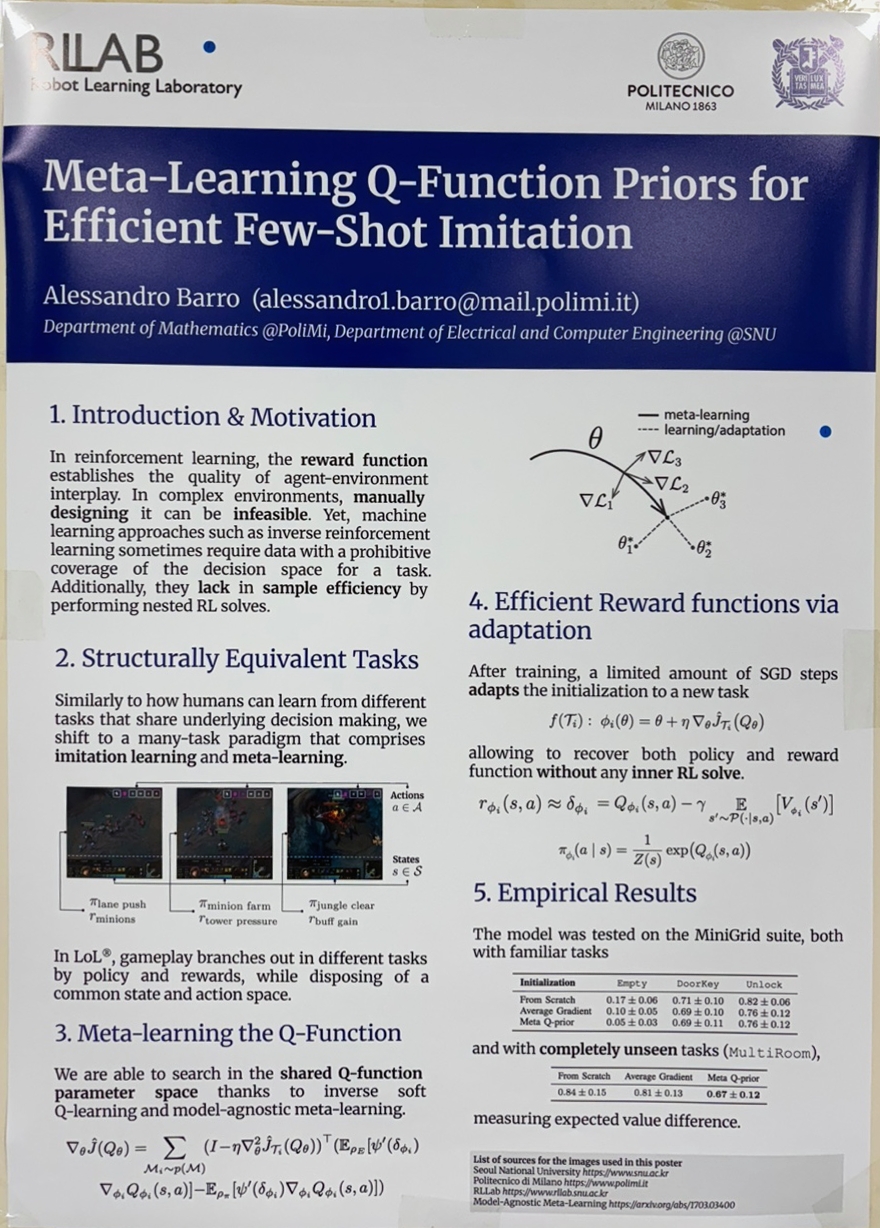

Meta Learning Q-Function Priors for Efficient Few-Shot Imitation (Barro Alessandro)

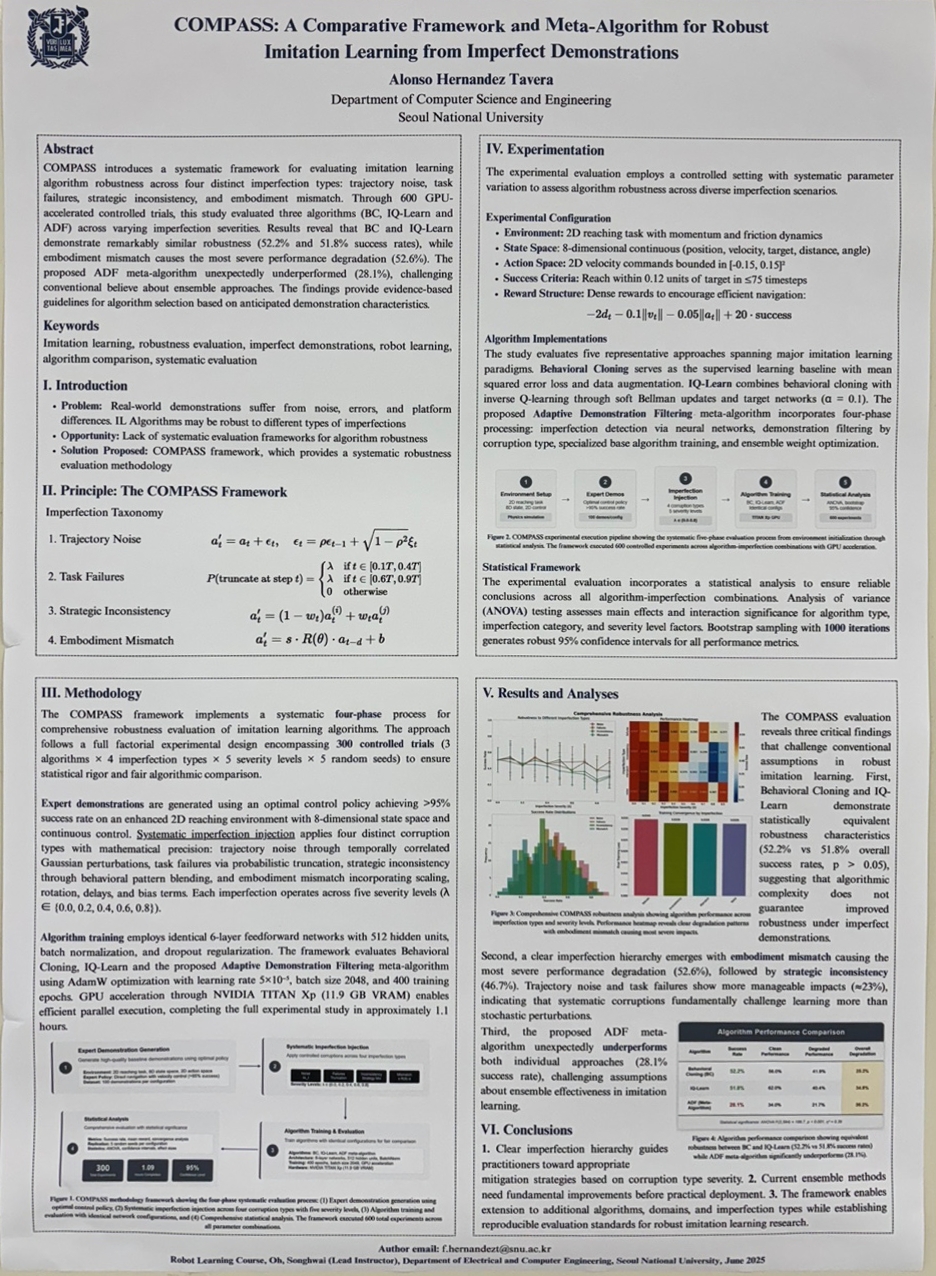

COMPASS: A Comparative Framework and Meta-Algorithm for Robust Imitation Learning from Imperfect Demonstrations (Hernandez Tavera Faiber Alonso)

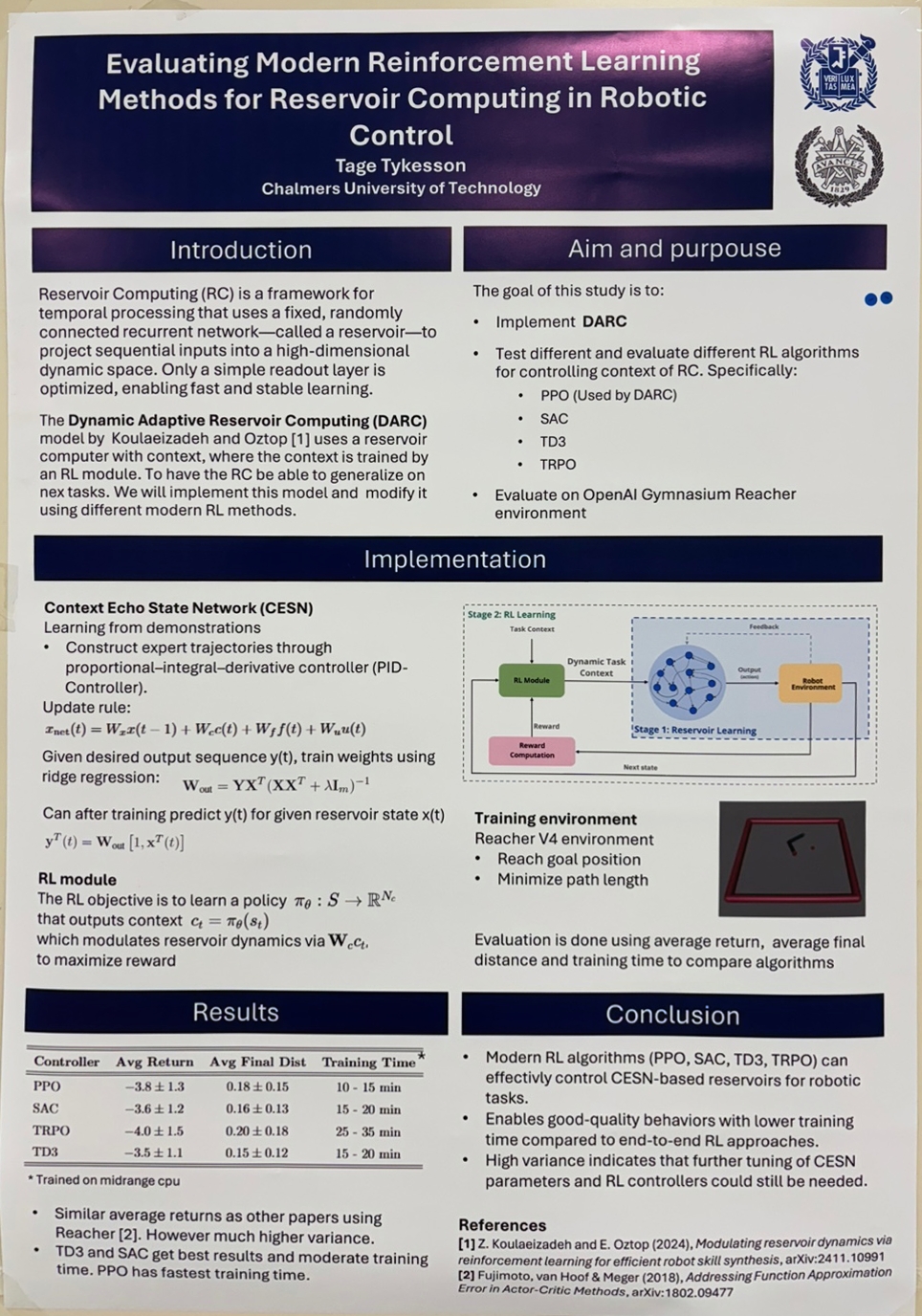

Evaluating Modern Reinforcement Learning Methods for Reservoir Computing in Robotic Control (Tykesson Tage)

fast and stable learning. This architecture is well-suited for tasks with time-dependent dynamics, such as robotic control or chaotic systems. Koulaeizadeh and Oztop previously introduced Dynamic Adaptive Reservoir Computing (DARC), which integrates reinforcement learning with an Echo State Network (ESN) by modulating a task-specific context vector. In this project, we reproduce the original DARC architecture using Proximal Policy Optimization (PPO) and extend it by evaluating alternative RL algorithms, including Soft Actor-Critic (SAC), Trust Region Policy Optimization (TRPO), and Deep Deterministic Policy Gradient (DDPG). Experiments are conducted on the Gymnasium Reacher environment, a continuous control task where the agent must actuate a two-joint arm to reach variable target positions. To evaluate generalization, the reservoir is trained and tested on distinct sets of target positions. Each method is assessed based on final reaching accuracy and sample efficiency. The results show meaningful differences in how the tested reinforcement learning algorithms interact with the reservoir-based control architecture, highlighting the importance of algorithm selection when combining reservoir computing with policy learning, and support the broader viability of this hybrid approach for continuous control tasks.
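To make the reservoir idea concrete, here is a minimal pure-Python sketch of a leaky Echo State Network whose input is a signal concatenated with a context vector. It is not the DARC implementation: the function names, the reservoir size, the leak rate, and the way the context enters (as extra input channels) are all assumptions chosen for illustration.

```python
import math
import random


def make_reservoir(n_inputs, n_units, seed=0):
    """Build fixed random input and recurrent weights (never trained)."""
    rng = random.Random(seed)
    w_in = [[rng.uniform(-1.0, 1.0) for _ in range(n_inputs)]
            for _ in range(n_units)]
    # Each recurrent row has absolute sum <= 0.9, which bounds the spectral
    # radius below 1 (a simple stand-in for the usual spectral-radius scaling).
    w_res = [[rng.uniform(-0.9, 0.9) / n_units for _ in range(n_units)]
             for _ in range(n_units)]
    return w_in, w_res


def esn_step(state, inputs, w_in, w_res, leak=0.3):
    """One leaky-integrator reservoir update."""
    new_state = []
    for i, s_i in enumerate(state):
        pre = sum(w * u for w, u in zip(w_in[i], inputs))
        pre += sum(w * s for w, s in zip(w_res[i], state))
        new_state.append((1.0 - leak) * s_i + leak * math.tanh(pre))
    return new_state


def run_reservoir(signal, context, w_in, w_res):
    """Drive the reservoir with a scalar signal plus a fixed context vector."""
    state = [0.0] * len(w_res)
    for u in signal:
        state = esn_step(state, [u] + list(context), w_in, w_res)
    return state


# Hypothetical setup: one signal channel plus a 2-dimensional context.
w_in, w_res = make_reservoir(n_inputs=3, n_units=8)
signal = [math.sin(0.2 * t) for t in range(30)]
state_a = run_reservoir(signal, [1.0, 0.0], w_in, w_res)
state_b = run_reservoir(signal, [0.0, 1.0], w_in, w_res)
```

The same fixed reservoir ends in different states for the two context vectors, which is the handle an RL agent could use to steer behavior without retraining the recurrent weights.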

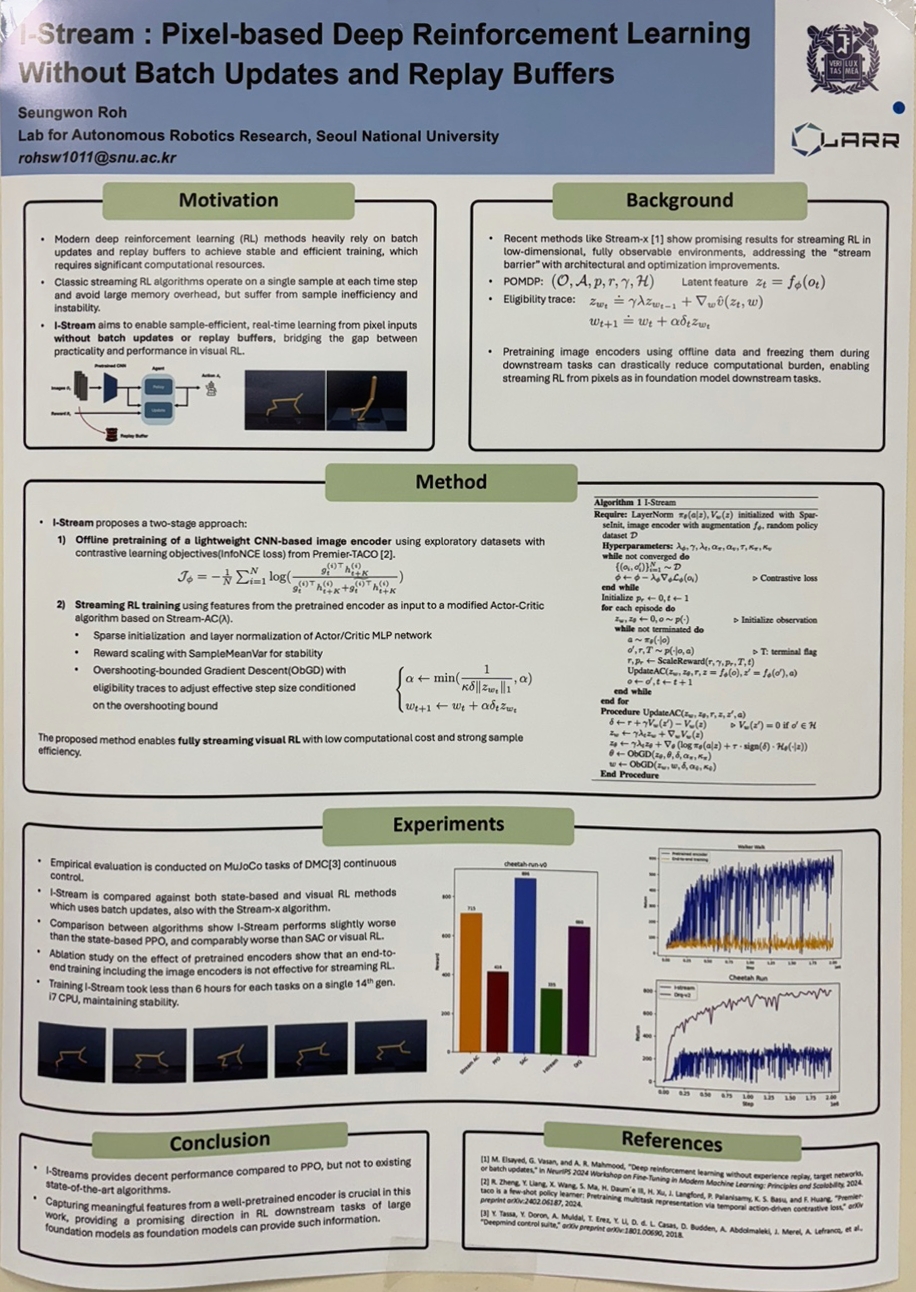

I-Stream: Pixel-based Deep Reinforcement Learning Without Batch Updates and Replay Buffers (노승원)

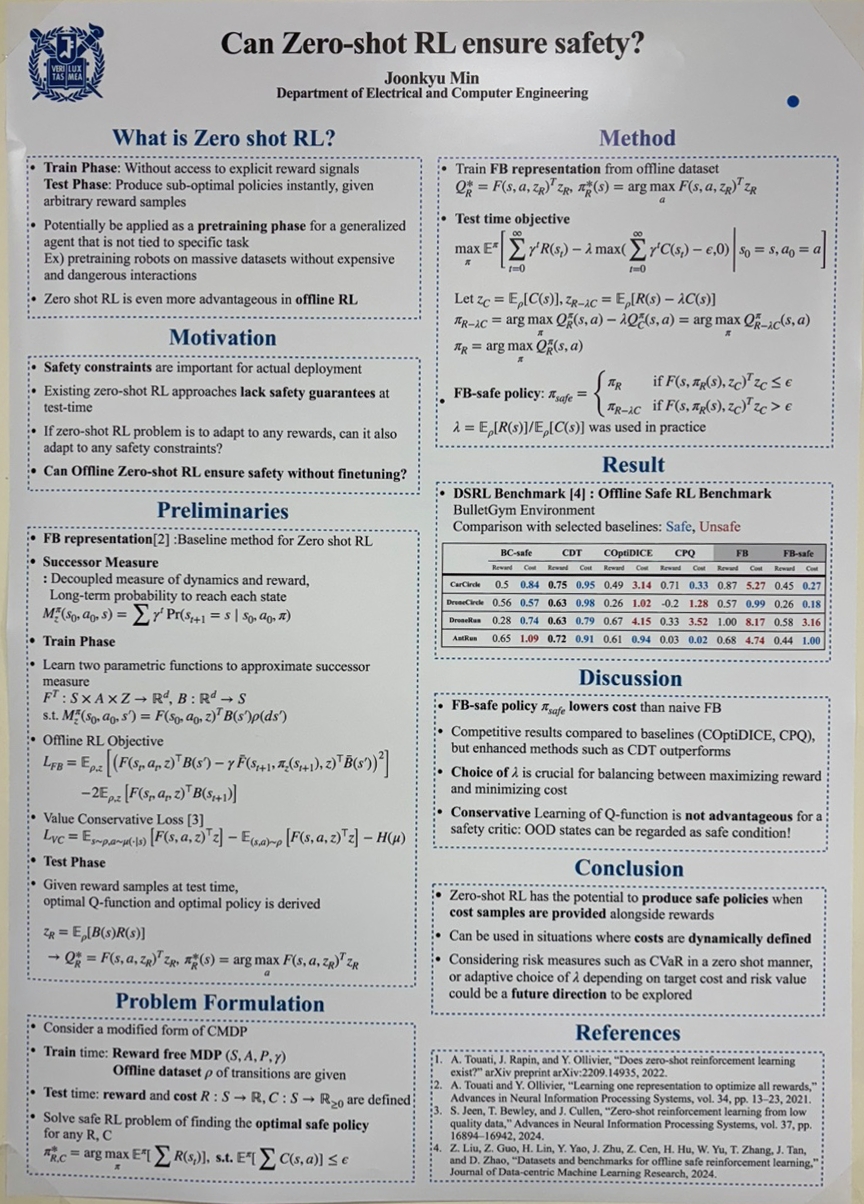

Can Zero-shot RL enable test time safety? (민준규)

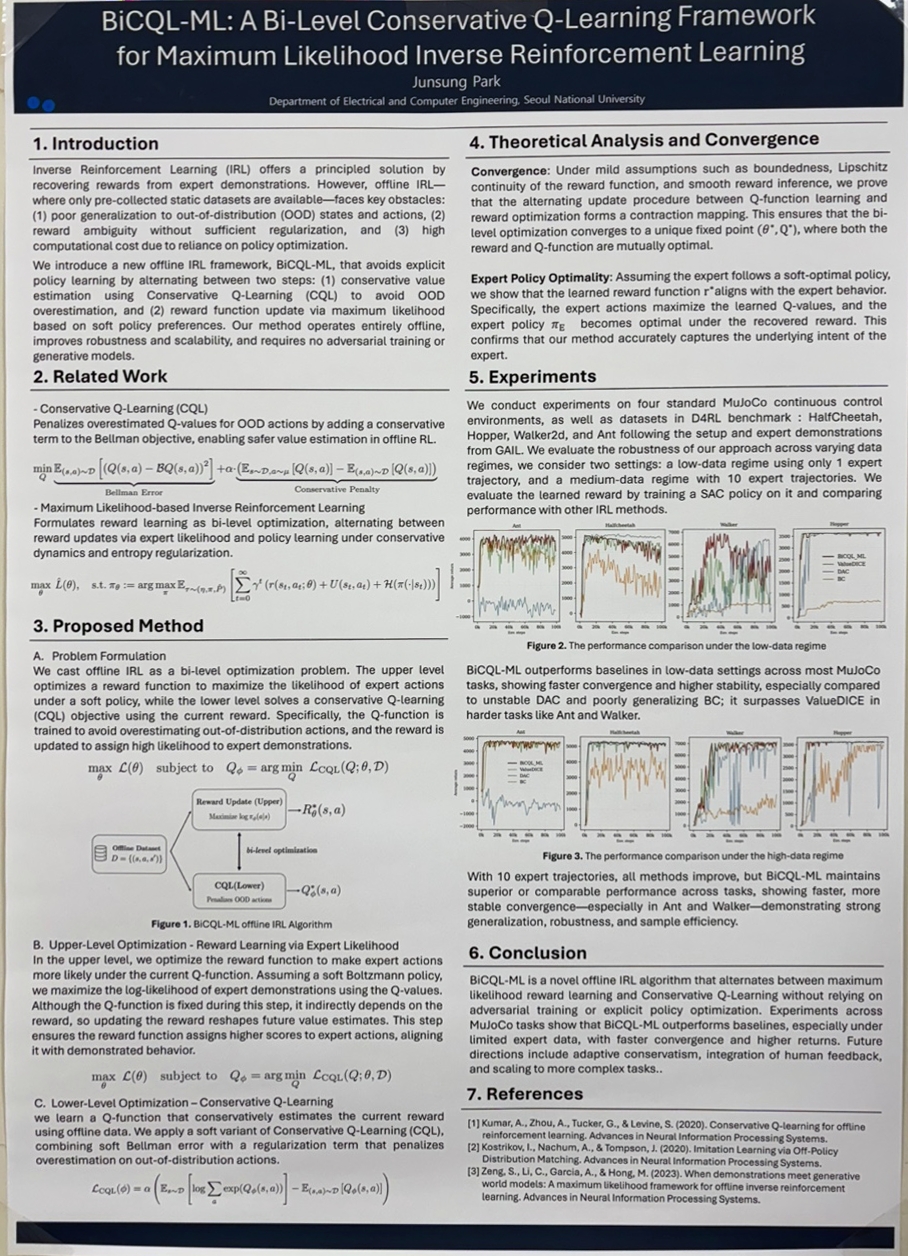

BiCQL-ML: A Bi-Level Conservative Q-Learning Framework for Maximum Likelihood Inverse Reinforcement Learning (박준성)

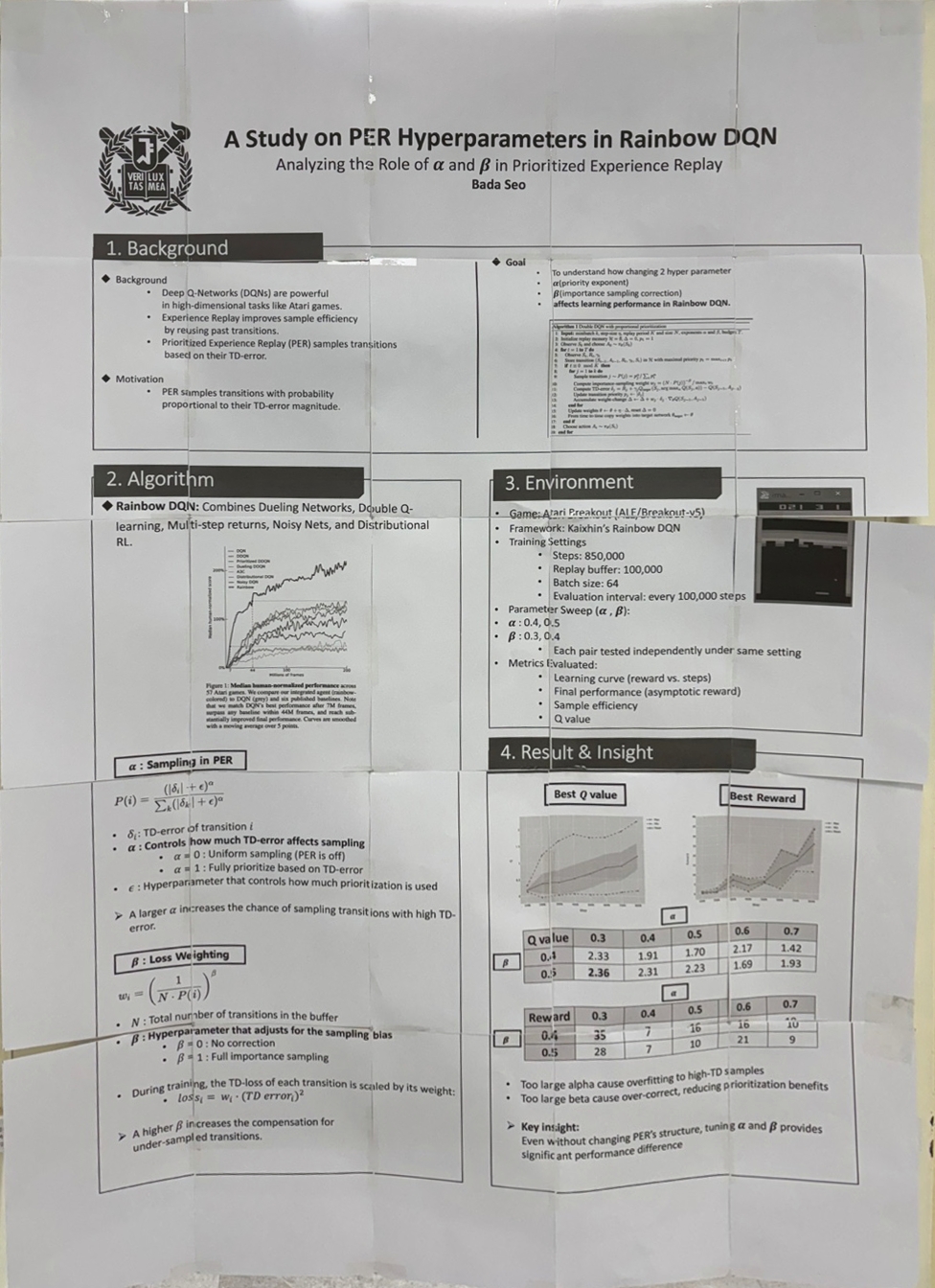

A Recency-Aware Prioritization Strategy for Experience Replay in Deep Q-Networks (서바다)

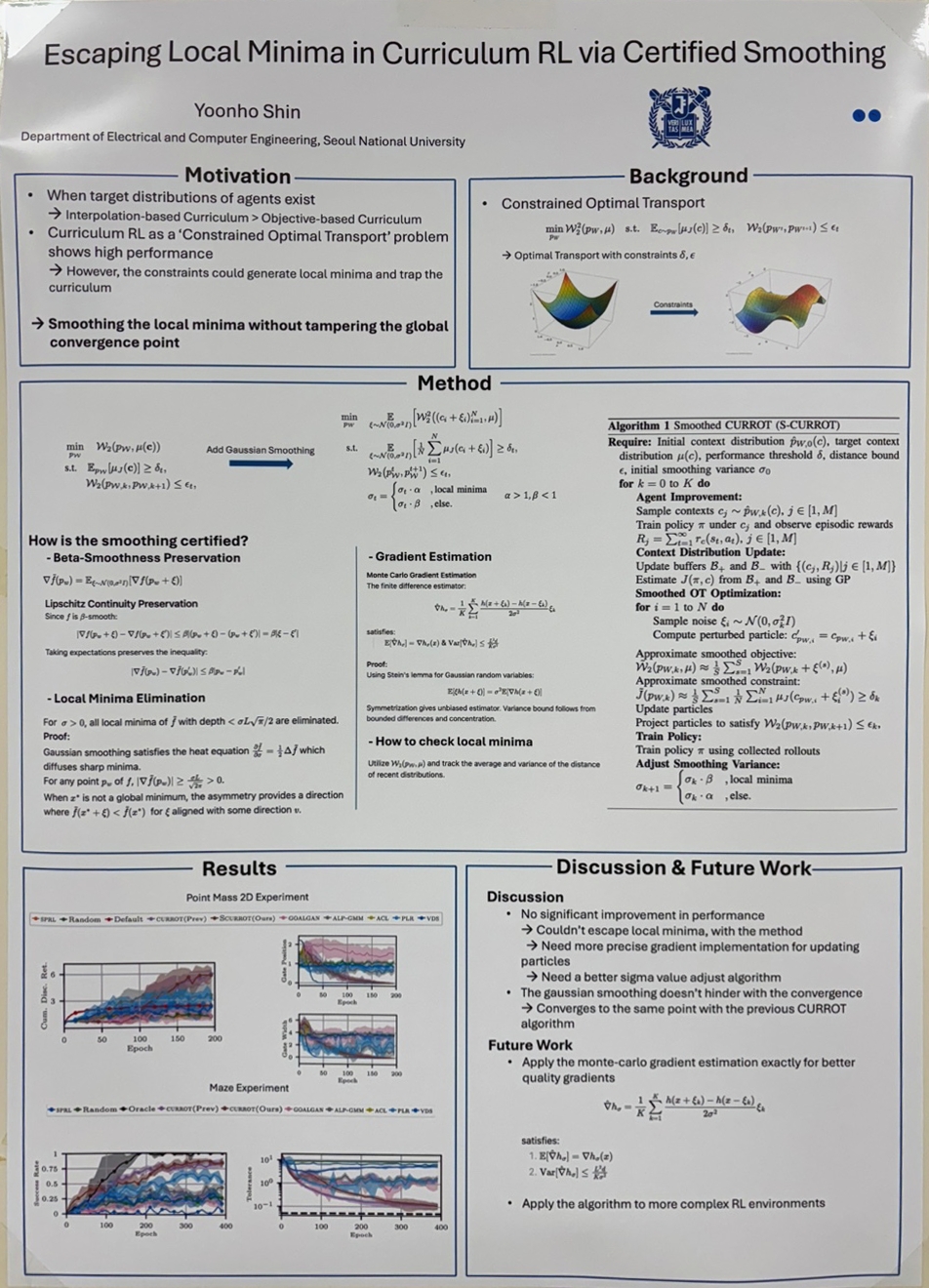

Escaping Local Minima in Optimal Transport Based Curriculum RL via Certified Smoothing (신윤호)

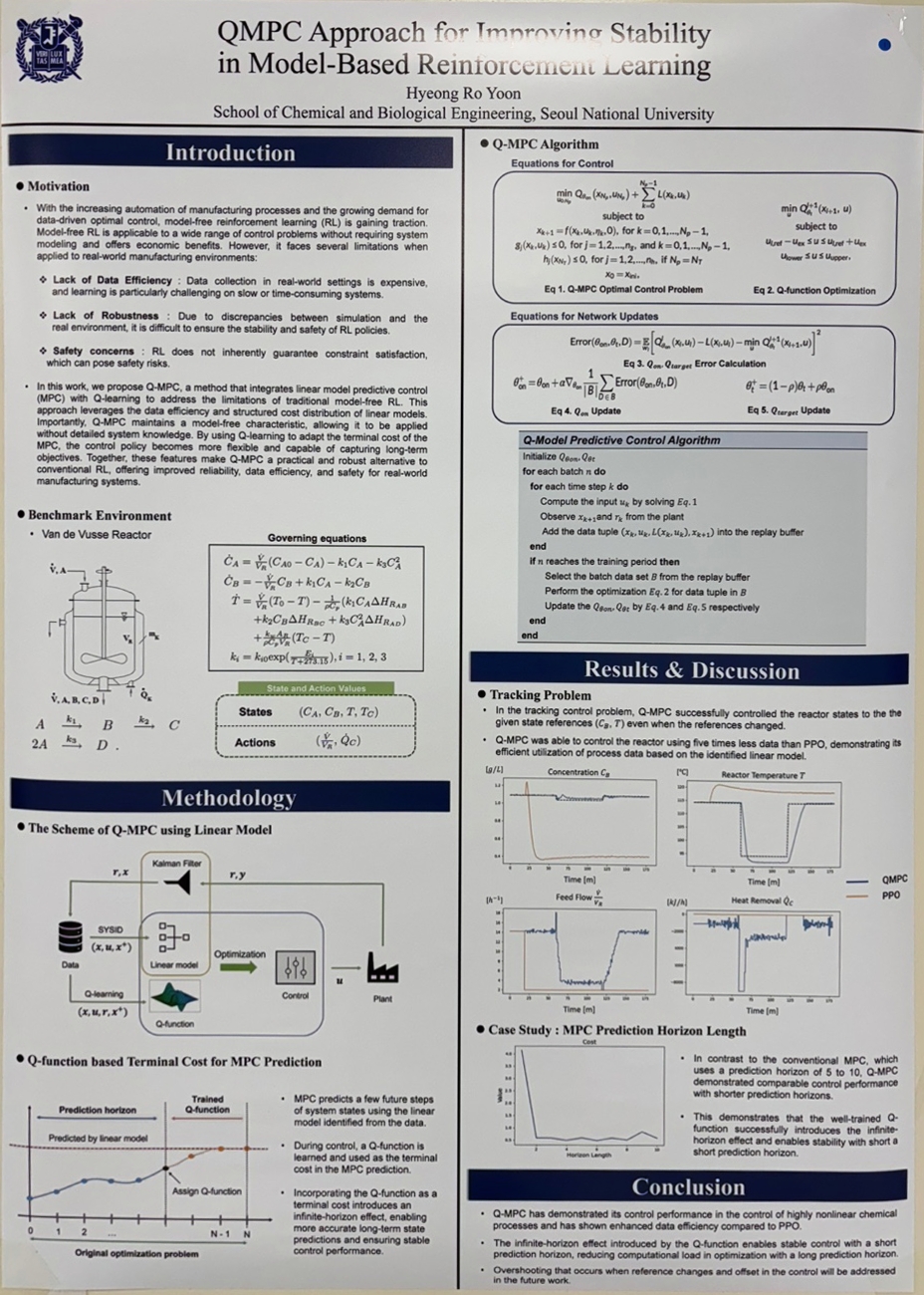

QMPC Approach for Improving Stability in Model-Based Reinforcement Learning (윤형로)

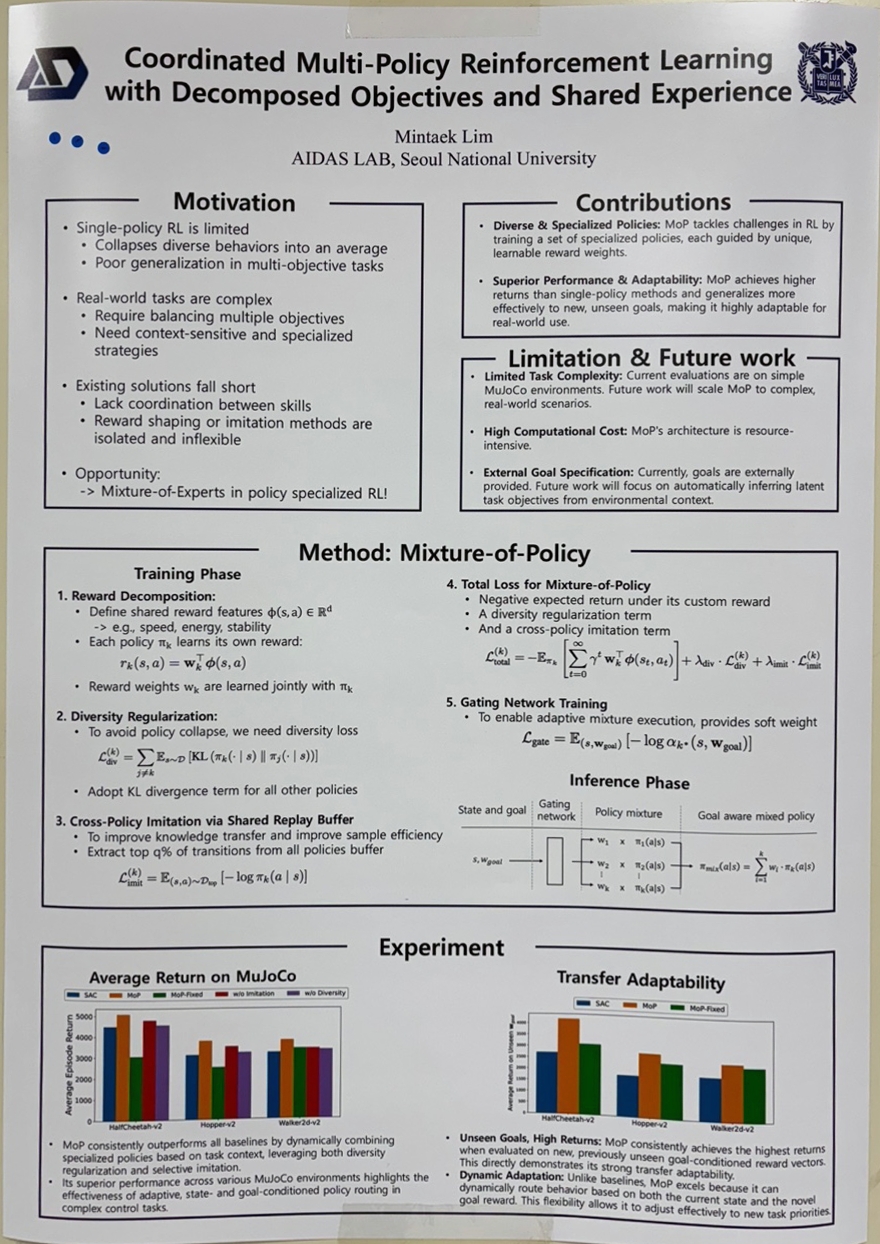

Coordinated Multi-Policy Reinforcement Learning with Decomposed Objectives and Shared Experience (임민택)

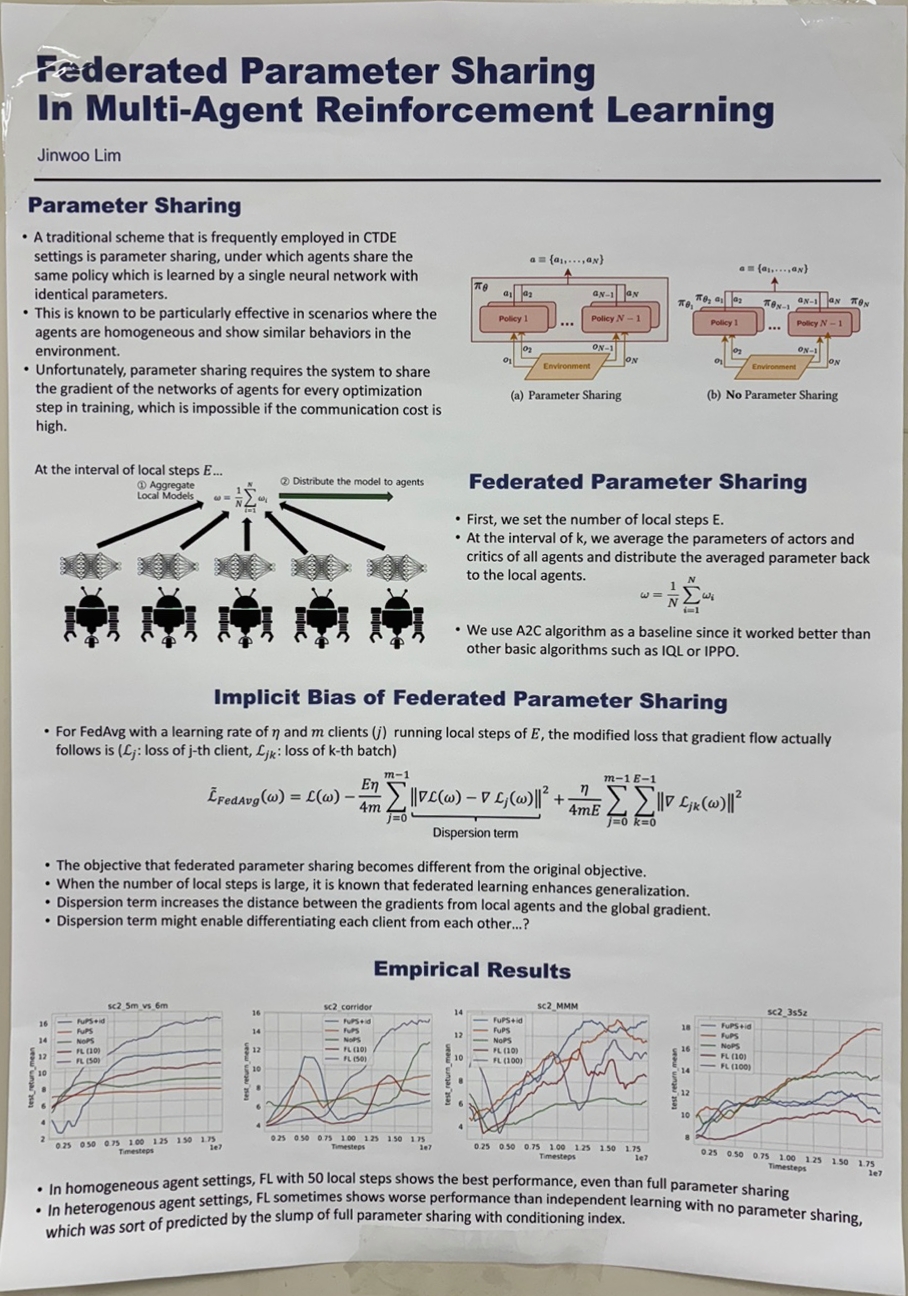

Federated Parameter Sharing In Multi-agent Reinforcement Learning (임진우)

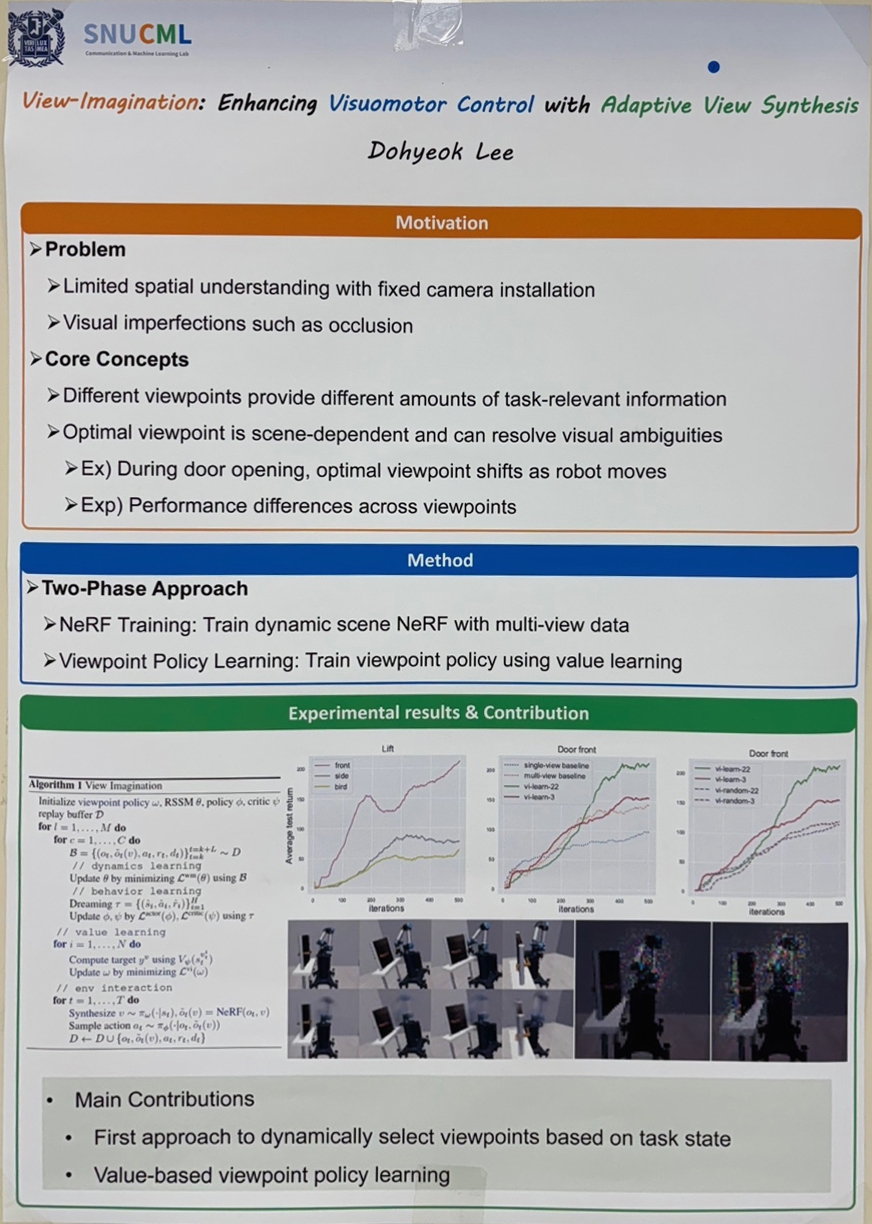

View-Imagination: Enhancing Visuomotor Control with Adaptive View Synthesis (이도혁)

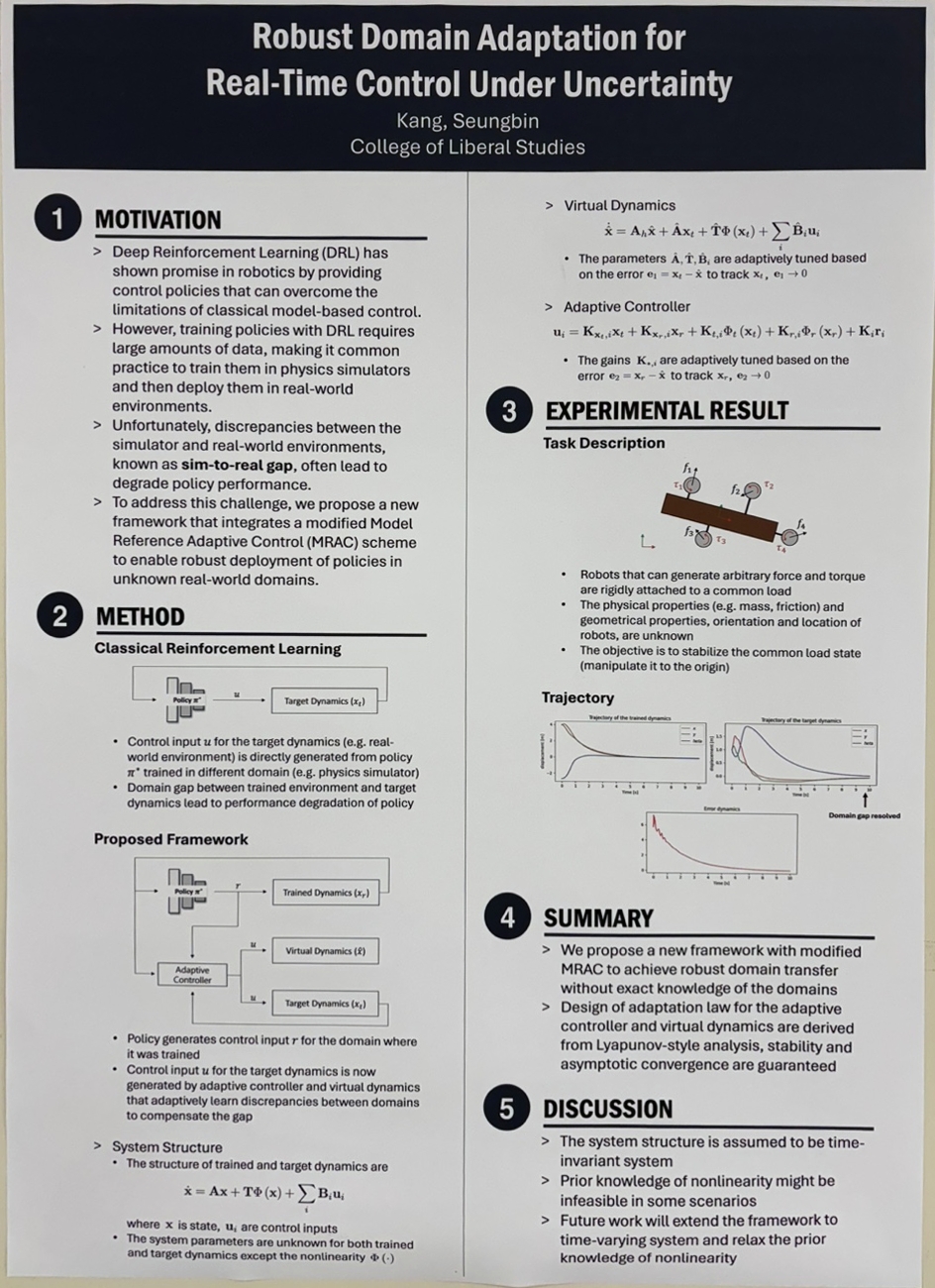

Online Domain Adaptation for Cooperative Space Debris Manipulation (강승빈)

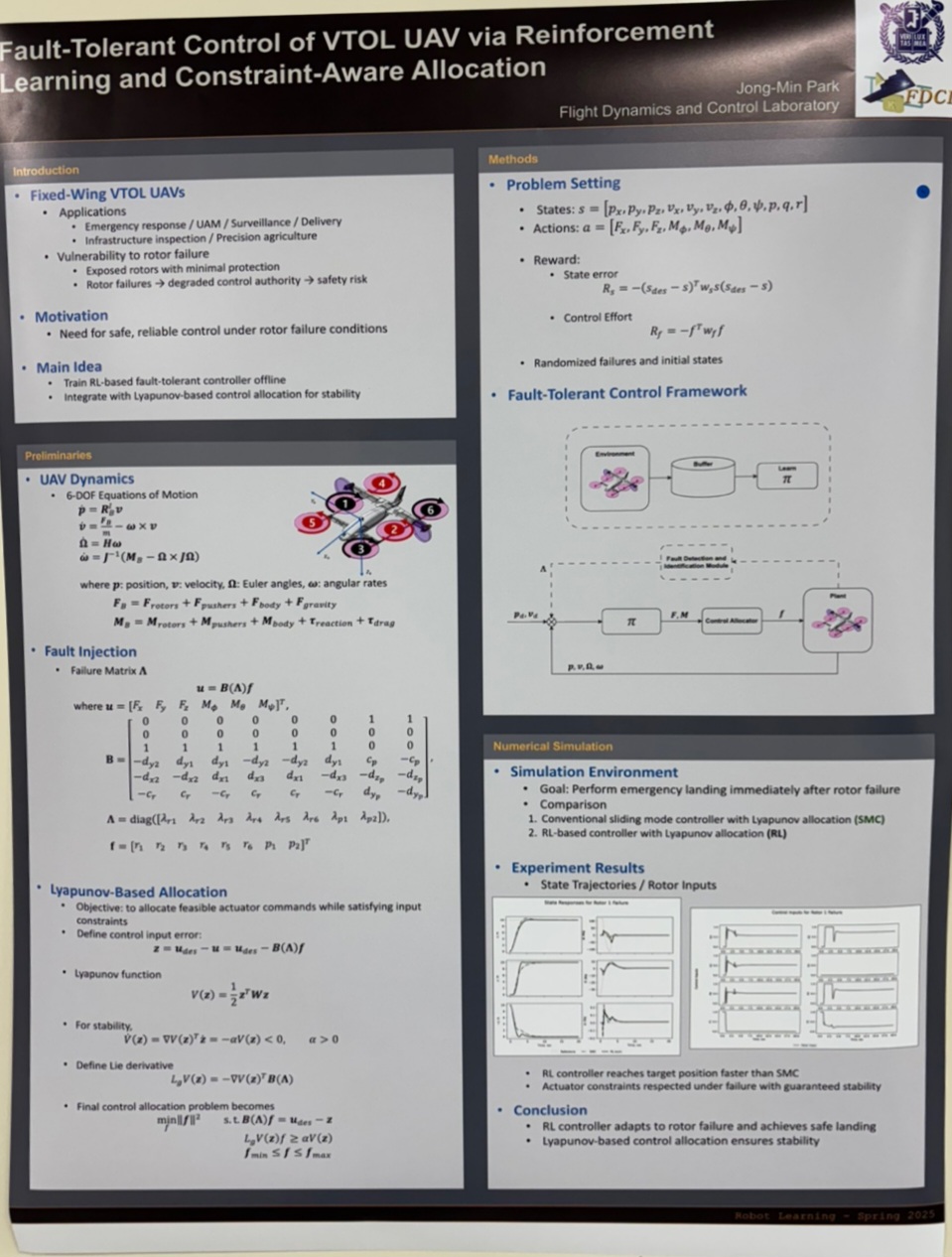

Fault-Tolerant Control of VTOL UAVs via Reinforcement Learning and Constraint-Aware Allocation (박종민)

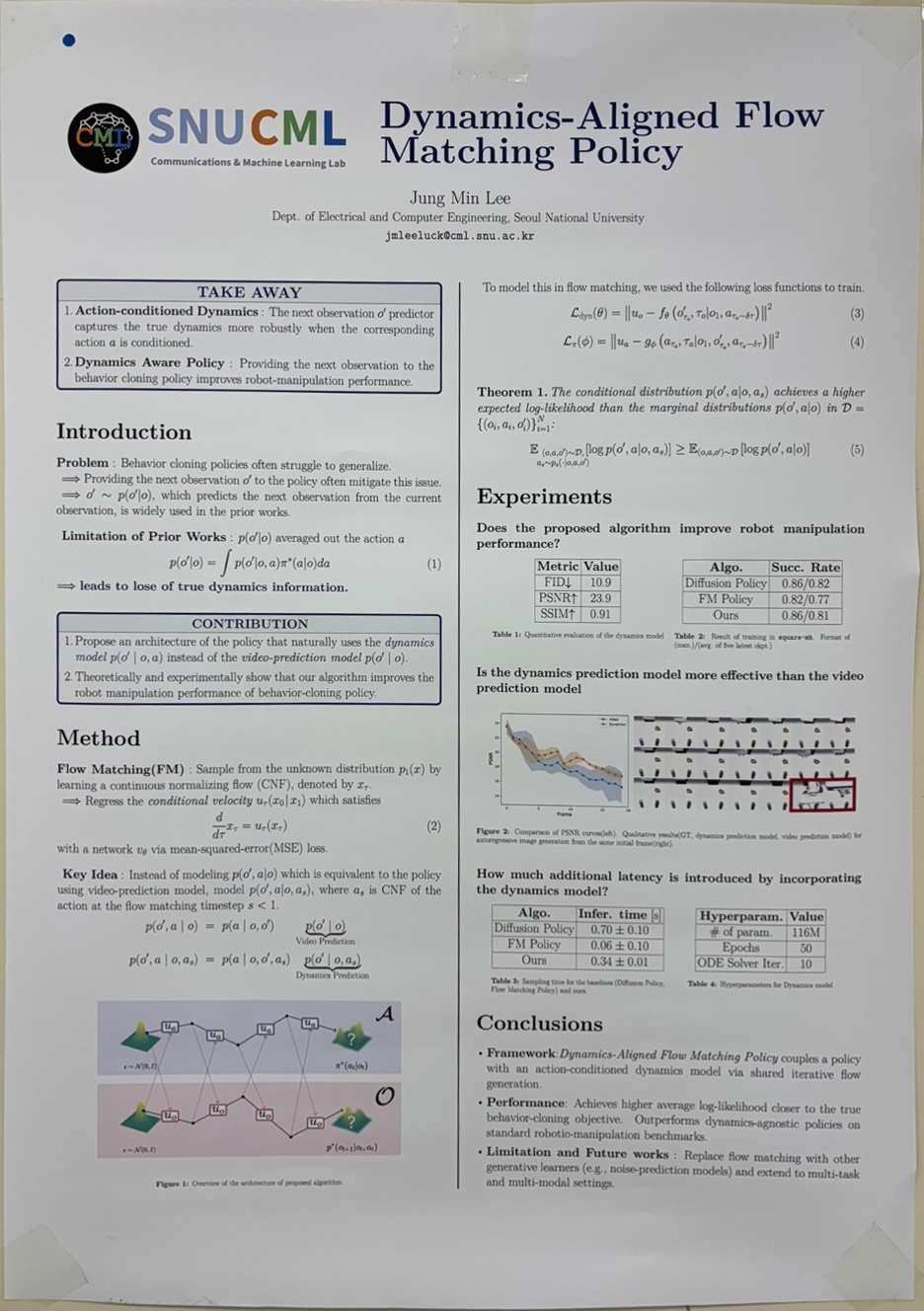

Dynamics-Aligned Flow Matching Policy for Robot Learning (이정민)

While recent approaches leverage video prediction models to implicitly capture dynamics, they lack explicit action conditioning, leading to averaged predictions over actions that obscure critical dynamics information. We propose a Dynamics-Aligned Flow Matching Policy that integrates dynamics prediction into policy learning through iterative flow generation. Our method introduces a novel architecture in which the policy and dynamics models share intermediate generation samples during training, enabling self-correction and improved generalization. We provide both theoretical analysis and empirical evidence demonstrating that our method achieves superior approximation performance compared to existing behavior cloning baselines.

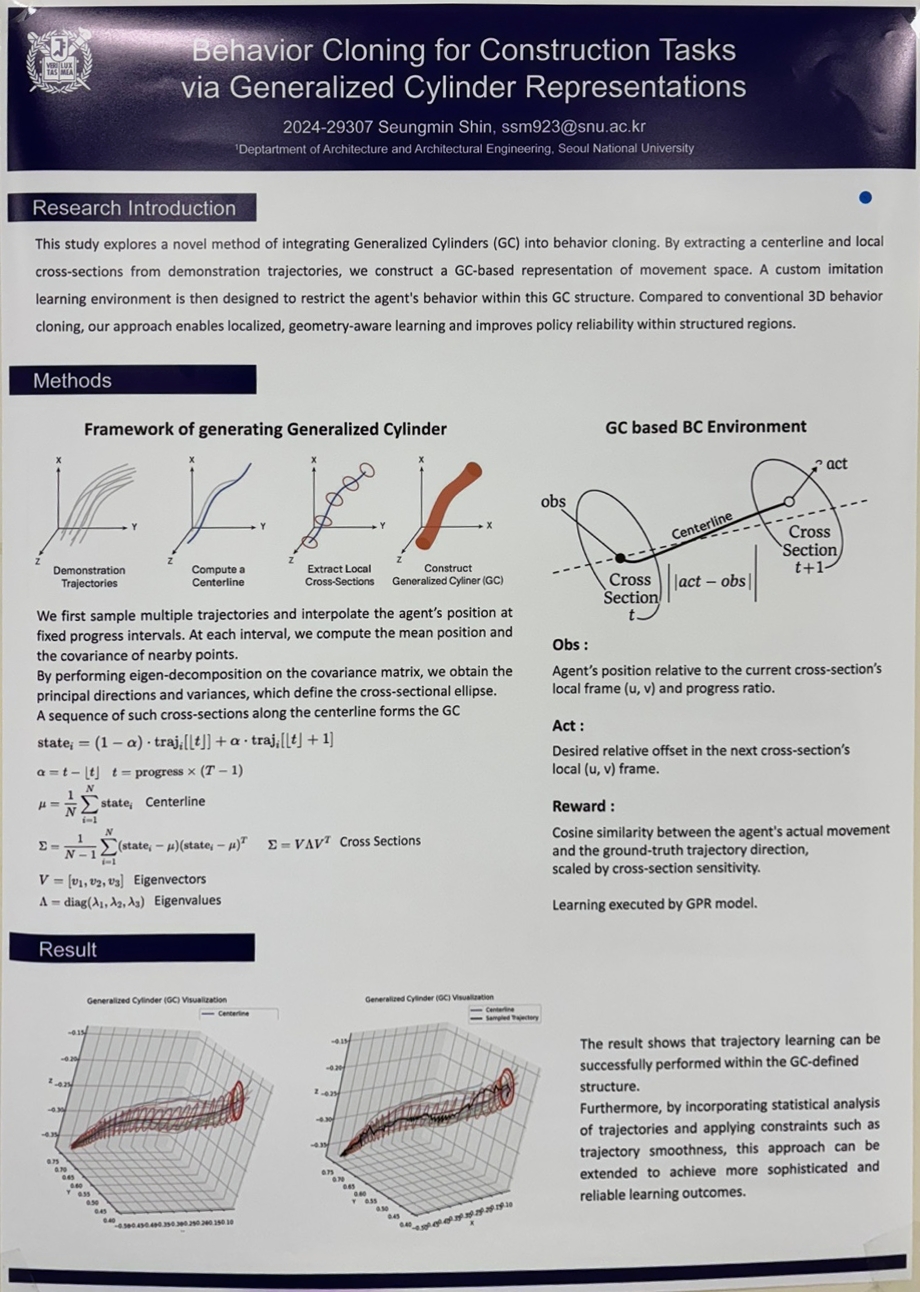

Behavior Cloning for Construction Tasks via Generalized Cylinder Representations (신승민)

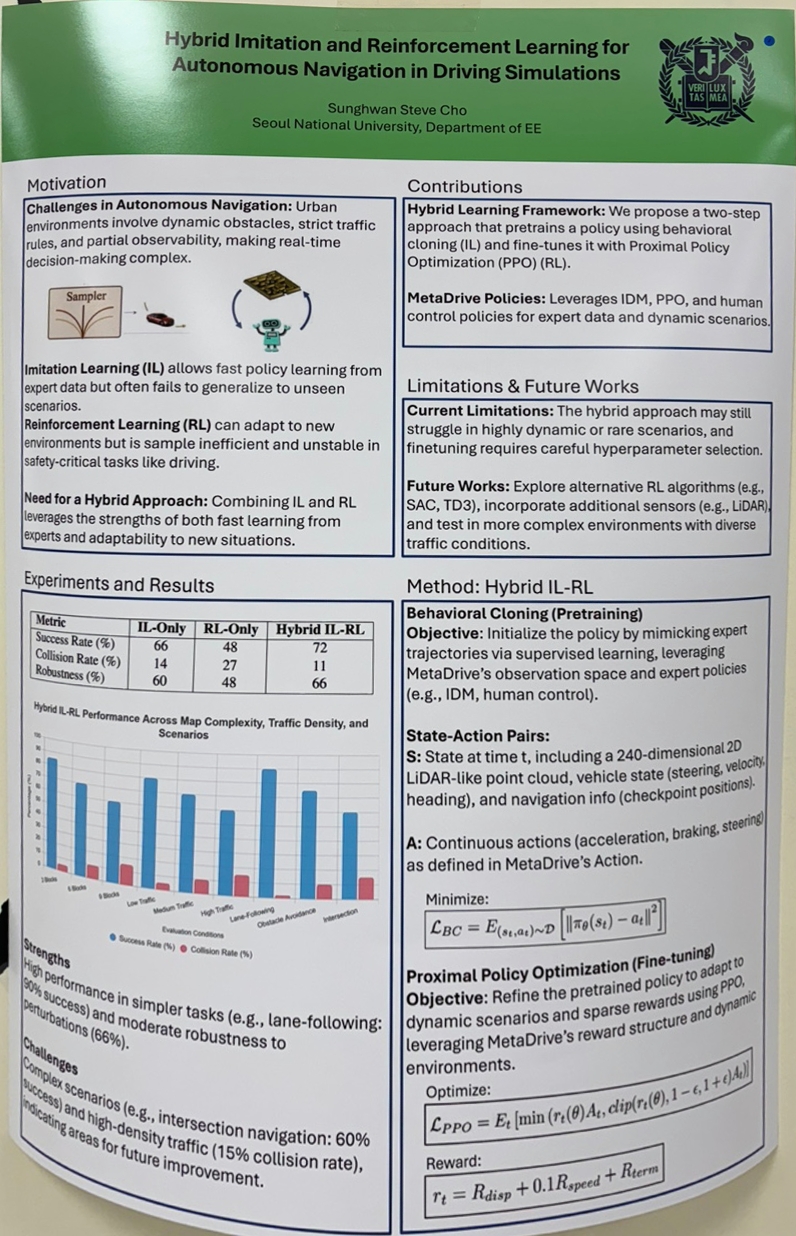

Hybrid Imitation and Reinforcement Learning for Autonomous Navigation in Realistic Driving Simulations (조성환)

2. RL for Robotics & AI Systems

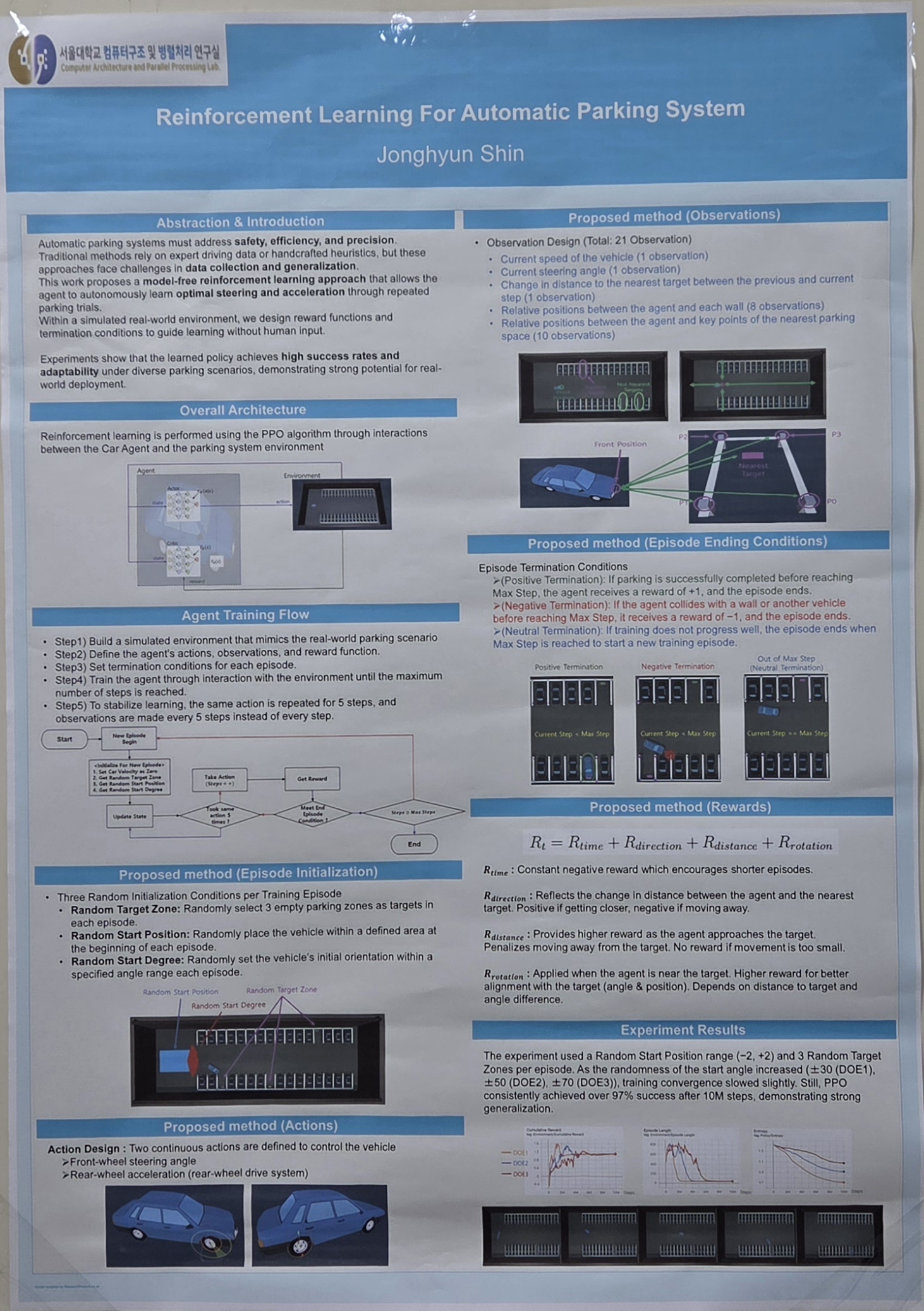

Reinforcement Learning For Automatic Parking System (신종현)

Force-Aware Deformable Object Manipulation with Diffusion policy (강승훈)

Reinforcement Learning-based Prior Map Localization Using Odometry Uncertainty (서형석)

For example, vision-based sensors may misrecognize different locations as the same place in environments with poor texture, low illumination, or repetitive patterns. Similarly, LiDAR sensors can struggle to accurately estimate position in environments with simple or repetitive structures due to matching errors. As such, the quality of observational data collected by the robot and the resulting localization performance can vary significantly depending on the viewpoint.

Previous studies on Uncertainty-Aware Model Predictive Control (UA-MPC) have quantitatively modeled odometry uncertainty based on viewpoint and used this to control the rotation direction of a motorized LiDAR, thereby improving odometry performance.

This study proposes a reinforcement learning-based viewpoint selection method that actively improves localization performance by leveraging uncertainty information associated with different viewpoints. Specifically, when the robot can steer its sensor in a certain direction, it computes the localization uncertainty for that viewpoint and uses an uncertainty-aware reward function to train a policy. The policy is trained using the Proximal Policy Optimization (PPO) algorithm, enabling the robot to autonomously choose directions that enhance localization performance.

This study focuses on LiDAR-based localization scenarios, with simulations conducted in the MARSIM environment. Experimental results show that the proposed RL-based viewpoint selection method significantly improves localization success rates compared to conventional approaches, especially in environments with repetitive or symmetrical structures where false loop closures are common. This research demonstrates the potential of active perception to enhance localization performance and lays the groundwork for future real-world applications and extensions to multi-sensor fusion.
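One way an uncertainty-aware reward could look is sketched below. The abstract does not specify the reward, so everything here is an assumption: the use of the covariance trace as the uncertainty measure, the `weight` and `success_bonus` parameters, and the candidate viewpoint names. The greedy selection at the end only illustrates the reward signal; in the project the choice is made by a PPO-trained policy.

```python
def uncertainty_reward(cov, localized, weight=0.5, success_bonus=1.0):
    """Reward a viewpoint: bonus for successful localization minus a
    penalty proportional to the trace of the pose covariance (assumed)."""
    trace = sum(cov[i][i] for i in range(len(cov)))
    bonus = success_bonus if localized else 0.0
    return bonus - weight * trace


# Hypothetical 2x2 position covariances for two candidate sensor directions.
candidates = {
    "toward_wall": [[0.40, 0.0], [0.0, 0.30]],      # repetitive structure
    "toward_corner": [[0.05, 0.0], [0.0, 0.04]],    # distinctive geometry
}

rewards = {name: uncertainty_reward(cov, localized=True)
           for name, cov in candidates.items()}
best_view = max(rewards, key=rewards.get)
```

Under these made-up covariances the viewpoint with the smaller trace receives the larger reward, so a policy trained on this signal would be pushed toward directions with lower localization uncertainty.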

Reward Refinement for High-Precision Hand-Object Trajectory Imitation (오우진)

Domain-Aware Offline Policy Learning for Service Robots in Diverse Real-World Environments (이승현)

Capturing a Multi-Modal Grasping Dataset with the Inspire and Allegro Hands (최민기)

Multi-Agent Reinforcement Learning for Multi-Object Tracking using Adaptive Proximal Policy Optimization (Hidayat Ignatio)

Reinforcement-Guided Subtask Decomposition for Unified Vision-Language Learning (김남기)

Meta Personalized Preference Learning for Adaptive Alignment of Large Language Models (김민성)

Data Augmentation through Neural Feature Randomization for Embodied Instruction Following (김병휘)

Continuous-Action Reinforcement Learning for Point-Cloud Registration with Curriculum-Driven SE(3) Exploration (김진용)

Confidence-Aware Expert-Mixed Reinforcement Learning for the Traveling Salesman Problem (변한준)

SALT: Sparsity-Aware Latency-Tunable LLM Inference Acceleration (장홍선)

However, their deployment in latency-sensitive environments remains challenging due to the quadratic complexity of the attention mechanism.

Recent work on sparse attention has shown promise in mitigating this bottleneck by attending to a subset of tokens, but existing methods typically rely on static sparsity patterns or fixed computational budgets, limiting their adaptability to varying runtime constraints.

In this project, we propose an adaptive sparse attention mechanism that dynamically adjusts the sparsity level during inference based on contextual signals. To this end, we formulate sparsity control as a sequential decision-making problem and leverage simple reinforcement learning (RL) to learn a policy that balances latency and accuracy in real time.

Our experiments demonstrate that it is crucial to determine not only the appropriate sparsity ratio but also the attention pattern for each layer based on runtime context. Evaluating our method on representative benchmarks, we show that adaptively adjusting the computational budget enables more effective trade-offs between inference efficiency and output quality.
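As a rough illustration of sparsity as a runtime knob, the sketch below implements top-k sparse attention for a single query, with a `keep_ratio` parameter that a controller could change per call. This is not the SALT mechanism: top-k selection, the function name, and the toy vectors are assumptions, and the RL policy that would choose `keep_ratio` is left out.

```python
import math


def sparse_attention(query, keys, values, keep_ratio):
    """Attend only to the highest-scoring fraction of keys.

    Returns the attended output vector and the indices that were kept.
    """
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    n_keep = max(1, math.ceil(keep_ratio * len(keys)))
    kept = sorted(range(len(keys)), key=lambda i: scores[i],
                  reverse=True)[:n_keep]
    # Softmax over the kept scores only (shifted by the max for stability).
    top = max(scores[i] for i in kept)
    weights = {i: math.exp(scores[i] - top) for i in kept}
    total = sum(weights.values())
    dim = len(values[0])
    output = [sum(weights[i] / total * values[i][d] for i in kept)
              for d in range(dim)]
    return output, kept


query = [1.0, 0.0]
keys = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [2.0, 0.0]]
values = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [5.0, 5.0]]

tight_out, tight_kept = sparse_attention(query, keys, values, keep_ratio=0.25)
loose_out, loose_kept = sparse_attention(query, keys, values, keep_ratio=0.5)
dense_out, dense_kept = sparse_attention(query, keys, values, keep_ratio=1.0)
```

Lower ratios touch fewer keys and therefore cost less, at the price of ignoring some context; a learned policy would pick the ratio per layer or per step instead of fixing it in advance.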

A Unified Reinforcement Learning Framework for Basic Block Instruction Scheduling (정창훈)

Hybrid Coverage Path Planning Using Boustrophedon Decomposition and Reinforcement Learning (한상규)

Coverage Path Planning (CPP) is a fundamental task in autonomous robotics, essential for applications such as cleaning, painting, and excavation. Classical methods like boustrophedon decomposition provide systematic and complete coverage in structured environments but often fail to adapt to local complexities or minimize traversal costs. Reinforcement Learning (RL), by contrast, offers data-driven adaptability but can suffer from sample inefficiency and poor generalization when applied in isolation.

This paper proposes a hybrid CPP framework that integrates boustrophedon decomposition for global map segmentation with local path refinement using deep RL. The RL component is explored through multiple algorithms including Deep Q-Networks (DQN), Proximal Policy Optimization (PPO), and Advantage Actor-Critic (A2C), each evaluated for efficiency and adaptability across different environment layouts.

Baseline comparisons are conducted against (1) standard boustrophedon planning, (2) a handcrafted heuristic local planner, and (3) standalone RL-based planners without decomposition. Experiments on benchmark grid maps with varying obstacle densities demonstrate that our hybrid approach achieves improved path efficiency, fewer turns, and reduced overlap, while maintaining full coverage. The results suggest that combining rule-based structure with learning-based adaptability enables more robust and context-aware coverage strategies for robotic systems.
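For readers unfamiliar with the boustrophedon pattern, the following sketch generates the back-and-forth sweep inside a single obstacle-free rectangular cell and computes two of the metrics named above (turns and overlap). The cell decomposition itself and the RL refinement are omitted, and the function names and grid size are assumptions for illustration.

```python
def boustrophedon_sweep(rows, cols):
    """Visit every cell of a rows x cols grid in alternating row directions."""
    path = []
    for r in range(rows):
        col_order = range(cols) if r % 2 == 0 else range(cols - 1, -1, -1)
        path.extend((r, c) for c in col_order)
    return path


def count_turns(path):
    """Count heading changes between consecutive moves along the path."""
    turns = 0
    for a, b, c in zip(path, path[1:], path[2:]):
        first = (b[0] - a[0], b[1] - a[1])
        second = (c[0] - b[0], c[1] - b[1])
        if first != second:
            turns += 1
    return turns


def overlap(path):
    """Number of revisited cells (0 means no cell is covered twice)."""
    return len(path) - len(set(path))


path = boustrophedon_sweep(rows=3, cols=4)
turns = count_turns(path)
revisits = overlap(path)
```

Within one clean cell the sweep is complete and overlap-free; the costs that a learned local planner could reduce arise mainly around obstacles and in the transitions between cells.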

Empowering LLMs with Strategic Tool Use via Reinforcement Learning (현시은)

Unlike previous methods that rely on static prompts or supervised training alone, our approach trains LLMs dynamically through interactive, feedback-driven episodes, allowing the model to autonomously learn optimal tool invocation policies.

We begin with supervised fine-tuning on curated reasoning examples augmented with tool use to establish a foundational understanding. Then, we apply Proximal Policy Optimization (PPO) to iteratively refine the model’s strategic reasoning through real-time interactions, substantially enhancing task-specific performance. Experimental evaluations on challenging benchmarks—including mathematical problem-solving (AIME) and scientific question answering (ARC Challenge)—show that LLMs trained with RL effectively self-correct, selectively use tools, and significantly outperform conventional models in both accuracy and reasoning robustness.

This work underscores the potential of combining reinforcement learning with targeted tool integration, paving the way for more accurate and reliable LLM applications in complex reasoning and real-world scenarios.
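PPO appears in several of these projects, so a small sketch of its clipped surrogate objective may help. This is the standard textbook form, not code from the project above; the function name, the clipping range `eps=0.2`, and the sample numbers are assumptions.

```python
def ppo_clip_objective(ratios, advantages, eps=0.2):
    """Mean clipped surrogate objective (to be maximized).

    ratios: new-policy probability divided by old-policy probability.
    advantages: advantage estimates for the same actions.
    """
    terms = []
    for ratio, adv in zip(ratios, advantages):
        clipped_ratio = max(1.0 - eps, min(1.0 + eps, ratio))
        # Taking the minimum removes any incentive to move the ratio
        # outside [1 - eps, 1 + eps] in the direction that raises the objective.
        terms.append(min(ratio * adv, clipped_ratio * adv))
    return sum(terms) / len(terms)


# A large ratio with positive advantage is capped at (1 + eps) * advantage.
gain_capped = ppo_clip_objective([1.5], [1.0])
# A small ratio with negative advantage is held at (1 - eps) * advantage.
loss_capped = ppo_clip_objective([0.5], [-1.0])
```

The clipping keeps each update close to the policy that collected the data, which is one reason PPO is a common choice for fine-tuning after a supervised stage.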

Isoform-aware long-read sequence alignment using deep reinforcement learning (황현서)

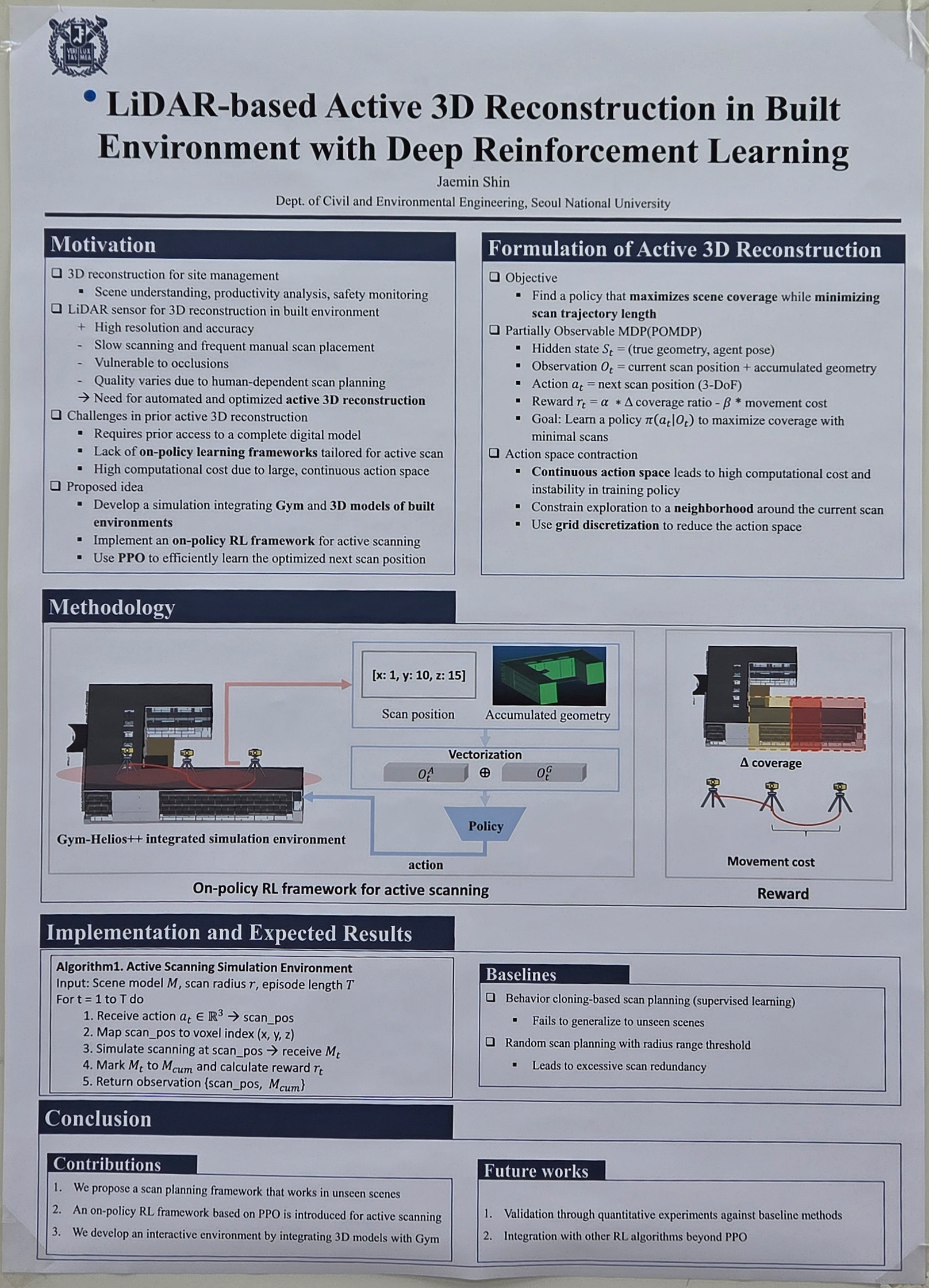

Vision-based Active 3D Reconstruction for Large-scale Scene with a Deep Reinforcement Learning (신제민)